Have you ever wondered what is the key to optimizing your business strategies? We give you the answer: the key is in the control of the data.

Have you ever wondered what is the key to optimizing your business strategies? We give you the answer: the key is in the control of the data.

Most companies store large amounts of information on different platforms, such as ERP, CRM, spreadsheets ... This causes the loss of data traceability, so the organization can not get a global view that allows it to make better strategic decisions.

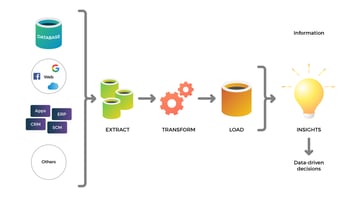

Currently, storing data is not enough for the organization to have greater competition, but it is necessary that the data are integrated in a single place so that they cease to be a cost to become a business asset. To achieve this, data integration is key and the organization must carry out an ETL process.

What Is an ETL Process?

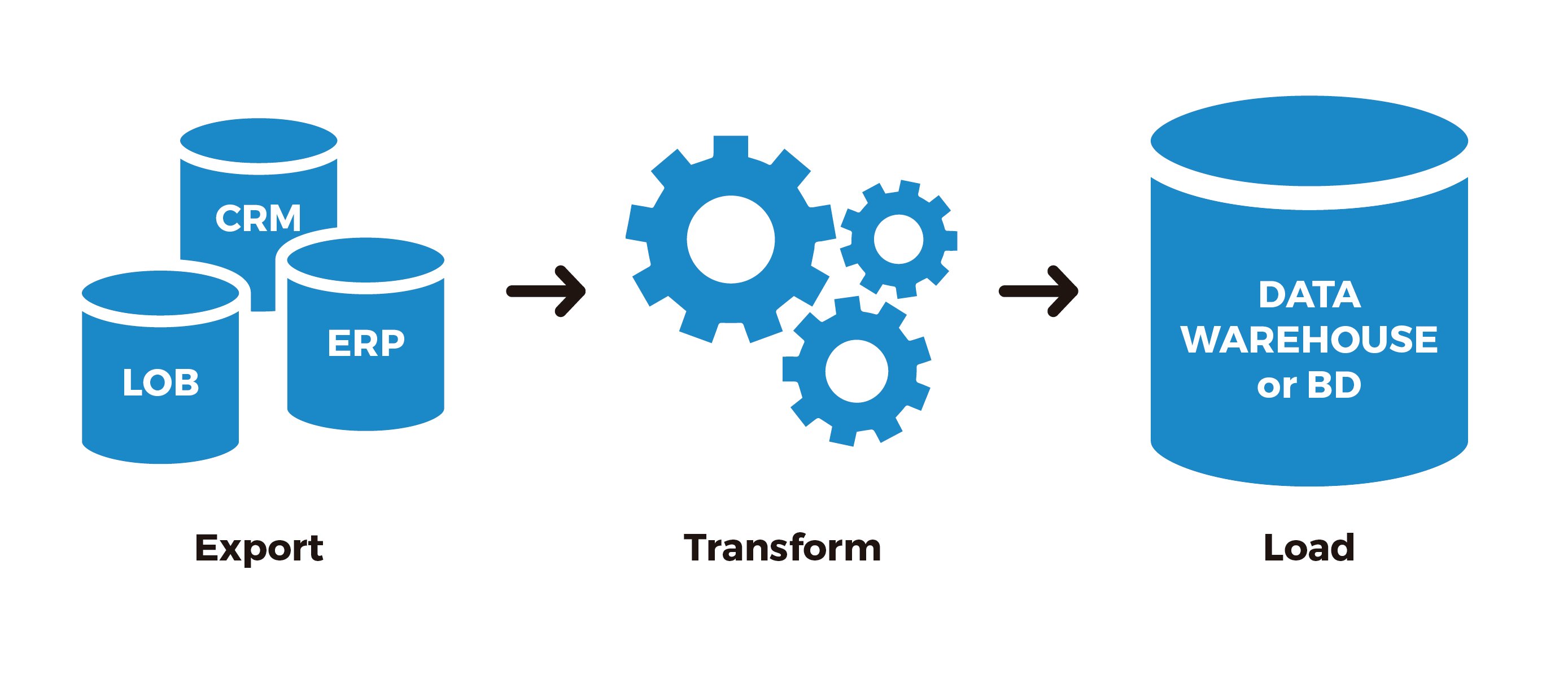

Briefly explained, an ETL process (Extract, Transform, Load) is a system that allows organizations to move data from multiple sources (ERP, CRM, Excel, Open Data, Internet Of Things, Social Networks ...) to integrate them into a single place, which could be a database, a data warehouse, and so on.

What Is a Data Warehouse?

If you haven't yet, read our article about Data Warehouses that covers the topic extensively. In short, a data warehouse is the place where we store business information from multiple sources for consultation and analysis, that is, it transforms the data into information becoming the core of the BI system (business intelligence). Thanks to this, for example, an organization can analyze its customers with all available information.

In turn, ETL stands for Extract, Transform and Load, which represent the three phases of the process that are carried out for the correct integration of the data and which we describe below.

What are the stages of an ETL process?

- Data extraction process: this is the first stage of ETL, where the data located in the different company repositories are extracted. In this step, the data is converted into a single format and prepared for transformation. Once we have all the data collected and raw, we have to do a treatment of cleaning or purification that will help us avoid mistakes that dirty them.

- Data transformation process: transforms the data into the structure we have defined in our data warehouse. The transformation step includes validation actions on business rules, technical validations (duplicates, integrity, nulls...), code normalization and homogenization, format changes, as well as sorting, filtering, crosses and aggregates.

- Data loading: the loading phase is the last one of the process, the transformed data begin to be loaded in the data warehouse where they are registered, they can be audited and they always have a history over time.

Due to the complexity of the ETL process and depending on the characteristics of each organization we can find several types of processing.

What are the types of processing in ETL tools?

- Data: a file is divided into small files so that it can be processed in parallel.

- Pipeline (segmentation): allows the simultaneous operation of several components within the same file. For example, you could be working on column 1 of a file at the same time you are working on the second and third, giving much more speed.

- Component: simultaneous operation of multiple processes in different data streams at the same workstation.

One of the most important aspects when undertaking an ETL process is the choice of the ETL tool, as it will depend on the size of the organization, the cost and the location of the storage.

Main ETL tools

When choosing an ETL (Extract, Transform, Load) tool, it is essential to consider several factors to ensure that the tool fits the specific needs and requirements of your organisation

1. Enterprise Software

Used by companies that have a larger size, higher cost compared to other options available. Examples: Oracle Data Integrator, SAP Data Services, IBM Infosphere DataStage, SAS Data Manager, Microsoft SQL Server Integration Services - SSIS.

2. Custom ETL Programming

Companies that develop their own tools in order to have greater flexibility. Examples of languages used for this programming: Java, .Net, Python, etc.

3. Open Source

Free open source tools for all users. Examples: Pentaho Data Integration, Talend Open Studio.

4. Cloud Service

Tools from Google, Microsoft or Amazon that have their own ETL services in the Cloud. Examples: Amazon AWS Glue, Microsoft Azure Data Factory, Google Cloud Dataflow, Amazon AWS EMR.

Similarly, to choose the type of tool most accurately, we recommend following the instructions of Gartner, a leading IT consultant.

Characteristics that our ETL tool should have according to Gartner

- Connectivity/adaptive capabilities (with support for data sources and destinations): ability to connect to a wide range of data structure types, including relational and non-relational databases, various file formats, XML, ERP, CRM or SCM applications, standard message formats (EDI, SWIFT or HL7), message queues, emails, websites, content repositories or office automation tools.

- Data delivery capabilities: ability to deliver data to other applications, processes or databases in various forms, with capabilities for batch, real-time or event triggered process scheduling.

- Data transformation capabilities: ability to transform data, from basic transformations (type conversion, string manipulation or simple calculations), intermediate transformations (aggregations, summarizations, lookups) to complex transformations such as free text analysis or rich text.

- Metadata capabilities and data modeling: recovery of data models from data sources or applications, creation and maintenance of data models, mapping from physical model to logical model, open metadata repository (with the possibility of interacting with other tools), synchronization of metadata changes in the different components of the tool, documentation, etc.

- Design capabilities and development environment: graphical representation of repository objects, data models and data flows, test and debugging support, teamwork capabilities, workflow management of development processes, etc.

- Data management capabilities (data quality, profiles and mining).

- Adaptation to the different hardware platforms and existing operating systems: Mainframes (IBM Z/OS), AS/400, HP Tandem, Unix, Wintel, Linux, Virtualized Servers, etc.

- Operations and administration capabilities: skills for management, monitoring and control of data integration processes, such as error management, collection of execution statistics, security controls, etc.

- Architecture and integration: degree of compactness, consistency and interoperability of the different components that make up the data integration tool (with a desirable minimum number of products, a single repository, a common development environment, interoperability with other tools or via API), etc.

When is it necessary to use an ETL process in my company?

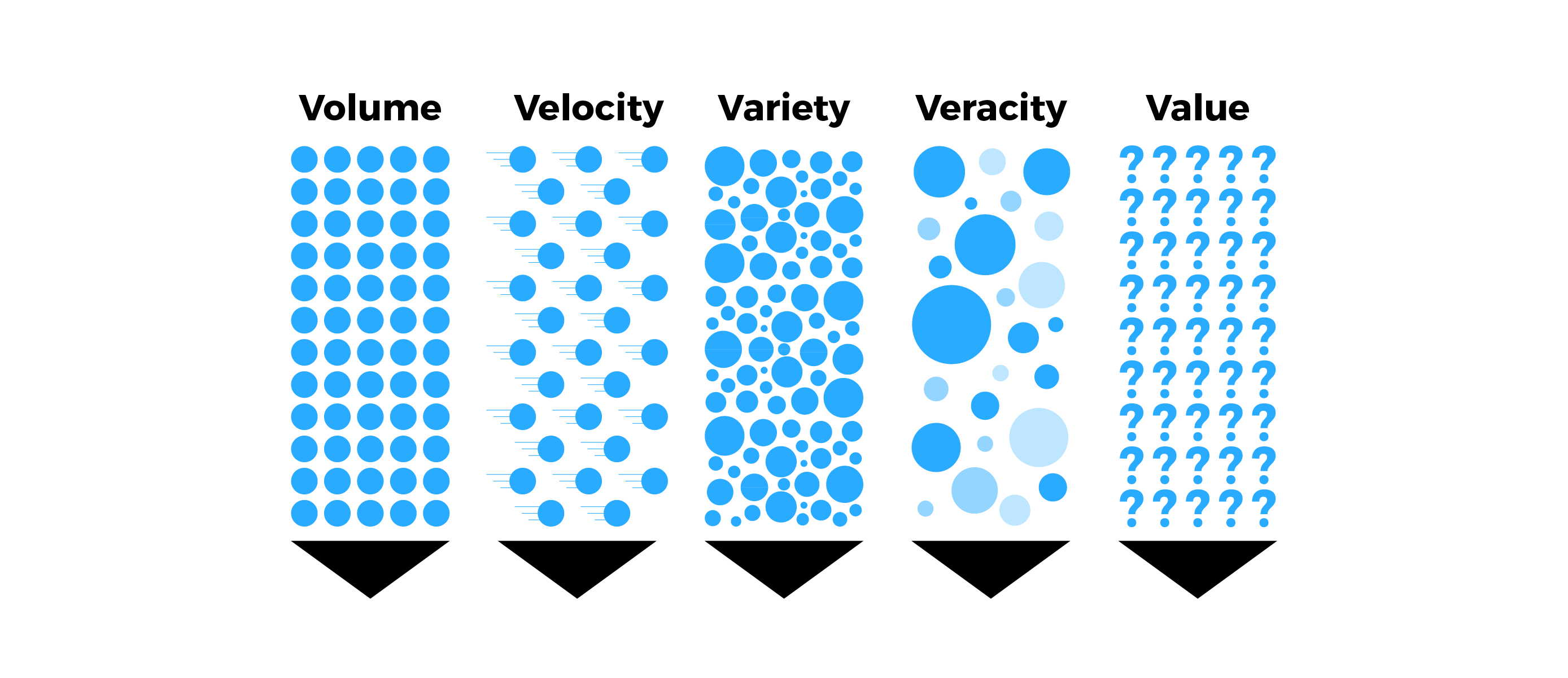

An ETL process helps companies create a support system for critical decision making and allows business managers to quickly access data in one place.

In addition, it provides clean and filtered data structures for exploitation by different end-user tools, increases data quality and value, and enables decision optimization. Transactional databases cannot answer complex business questions that can be answered thanks to ETL.

Another important reason is that the data warehouse is automatically updated. The ETL model offers a deep historical context for the business, as it collects a large amount of historical data for the analysis of different time periods, to discover trends and make future predictions. The data warehouse integrates many data sources to reduce processing in the production system and allows to reduce the total response time in analysis and report generation, while facilitating its preparation.

What is the difference between ETL and ELT?

ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) are two different approaches used in the process of data integration and data preparation. Both are used to move data from various sources into a data warehouse for analysis. The main difference between ETL and ELT lies in the order in which the data transformation steps are carried out.

Main differences between ETL and ELT:

- In ETL, the transformation stage is performed before loading the data into the data warehouse, while in ELT, the transformation stage is performed after loading the data into the data warehouse.

- ETL focuses on transforming data out of the data warehouse, which implies that the transformed data is stored in the data warehouse in an analysis-ready format. In contrast, ELT loads raw data into the data warehouse and allows transformations to be performed in the data warehouse itself.

- ELT offers greater flexibility in terms of performing real-time transformations and leveraging the processing capabilities of the data warehouse, while ETL may be more suitable for complex transformations and intensive cleansing processes before loading the data into the data warehouse.

The choice between ETL and ELT depends on the specific requirements of each project, the architecture of the data warehouse and the complexity of the transformations needed to prepare the data for analysis and reporting.

Conclusion

In short, the application of the ETL process is the construction and loading of a data warehouse in order to obtain a consolidated view of the data that drives better strategic decisions. Therefore, companies with defined processes for data storage in addition to the development of BI technologies achieve, apart from saving costs, added revenue through the monetization of their files. For example, for the owner of a hotel who needs to collect statistical information on average occupancy and room rate, we can, thanks to the ETL process and BI tools, find the aggregate revenue generated per room, collect market share statistics and customer survey data from each hotel to decide its competitive position in various markets. Similarly, we can analyze trends year after year, month after month, and day after day to offer discounts on room reservations.