What is Azure Synapse? What is the difference between Azure Synapse Analytics and SQL or Databricks?

In November 2019 Microsoft announced the launch of Azure Synapse. What is it and how does it relate to the Redmond company's other data analysis services? How has Azure Synapse Analytics changed since its first launch?

Azure Synapse Analytics is an unlimited information analysis service aimed at large companies that was presented as the evolution of Azure SQL Data Warehouse (SQL DW), bringing together business data storage and macro or Big Data analysis.

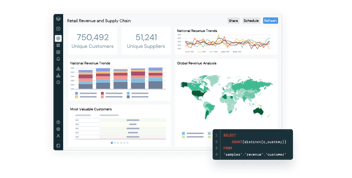

Synapse provides a single service for all workloads when processing, managing and serving data for immediate business intelligence and data prediction needs. The latter is made possible by its integration with Power BI and Azure Machine Learning, due to Synapse's ability to integrate mathematical machine learning models using the ONNX format. It provides the freedom to handle and query huge amounts of information either on demand serverless (a type of deployment that automatically scales power on demand when large amounts of data are available) for data exploration and ad hoc analysis, or with provisioned resources, at scale.

Do you work regularly with Power BI? At Bismart, as a Microsoft Power BI partner, we try to keep you informed of the best practices and all the news related to this set of services.

We have recently created an e-book en which we explain how to build a self-service BI strategy using Power BI. Download it now! It's free!

What is Azure Synapse?

Azure Synapse Analytics is a powerful tool offered by Microsoft that revolutionizes data analysis for large companies. It is a comprehensive service that combines business data storage and macro or Big Data analysis, making it an evolution of Azure SQL Data Warehouse. With its integration with Power BI and Azure Machine Learning, Synapse provides a unified platform for processing, managing, and serving data for immediate business intelligence and data prediction needs.

What sets Azure Synapse apart is its ability to handle and query massive amounts of information using either serverless deployment for data exploration and ad hoc analysis or provisioned resources for scaling. It supports several programming languages like SQL, Python, .NET, Java, Scala, and R, making it suitable for different analysis workloads and engineering profiles.

With its seamless integration with Azure Databricks, Azure Synapse enables users to continue utilizing Apache Spark for Big Data processing while benefiting from a specialized data architecture for extract, transform, and load (ETL) workloads. Azure Synapse Analytics is a game-changer for companies seeking to streamline their data analytics processes, reduce development times, and achieve successful data insights in milliseconds.

How does Azure Synapse Analytics work?

Microsoft's service is a SaaS (Software as a Service), and can be used on demand to run only when needed (which has an impact on cost savings). It has four components:

- SQL Analytics with full T-SQL based analysis: SQL Cluster (pay per unit of computation) and SQL on demand (pay per TB processed).

- Apache Spark fully integrated.

- Connectors with multiple data sources.

Azure Synapse uses Azure Data Lake Storage Gen2 as a data warehouse and a consistent data model that incorporates administration, monitoring and metadata management sections. In the security area, it allows you to protect, monitor, and manage your data and analysis solutions, for example using single sign-on and Azure Active Directory integration. Basically, Azure Synapse completes the whole data integration and ETL process and is much more than a normal database since it includes further stages of the process giving the users the possibility to also create reports and visualizations.

In terms of programming language support, it offers a choice of several languages such as SQL, Python, .NET, Java, Scala and R. This makes it highly suitable for different analysis workloads and different engineering profiles.

Everything is encompassed within the Synapse Analytics Studio that makes it easy to integrate Artificial Intelligence, Machine Learning, IoT, intelligent applications or business intelligence, all within the same unified platform.

Azure Synapse with T-SQL and Spark

With regard to the execution times, it allows for two engines. On one hand the traditional SQL engine (T-SQL) and on the other hand the Spark engine. This way it is possible to use T-SQL, for example, for batch, streaming and interactive processing, or Spark when Big Data processing with Python, Scala, R or .NET is required.

Here it links directly to Azure Databricks, the Apache Spark-based artificial intelligence and macrodata analysis service that allows automatic scalability and collaboration on shared projects in an interactive workspace. Azure Synapse provides a high performance connector between both services enabling fast data transfer. This means that it is possible to continue using Azure Databricks (an optimization of Apache Spark) with a data architecture specialized in extract, transform and load (ETL) workloads to prepare and shape data at scale. In turn, Azure Synapse and Azure Databricks can run analyses on the same data in Azure Data Lake Storage.

Azure Synapse and Azure Databricks provide us with even greater opportunities to combine analytical, business intelligence and data science solutions with a shared Data Lake between services.

On the Road to Maximum Compatibility and Power

Initially, the Microsoft service is presented as a solution to two fundamental problems that companies must face. The first of these is compatibility. The data analysis system that it integrates has the ability to work with both traditional systems and unstructured data and various data sources. It is thus able to analyze data stored in systems such as customer databases (with names and addresses located in rows and columns arranged like a spreadsheet) and also with data stored in a Data Lake in parquet format.

But it also provides greater versatility in automatically handling tasks to build a system for data analytics. This increased power has the direct consequence of reducing the amount of work needed by programmers, and by extension project development times (it is the first and only analysis system that has executed all TPC-H queries at petabyte scale).

Azure Synapse Analytics main advantadges

Azure Synapse achieves that projects that take several months can be completed in days, or complex database queries that take minutes or hours now take a few seconds.

1. Successful queries in milliseconds

In addition to scaling process and storage resources separately, Azure Synapse Analytics stands out for its result caching capability (it has a fully managed 1 TB cache). Thus, when a query is made it is stored in this cache to speed up the next query that consumes the same type of data.

This is one of the keys to it being able to throw responses in milliseconds. This is because the cache survives pause, resume and scale operations (which can be activated very quickly by a massive parallel processing architecture designed for the cloud).

2. Workloads and performance

Also noteworthy is its full support for JSON, data masking to ensure high levels of security, support for SSDT (SQL Server Data Tools) and especially workload management and how it can be optimized and isolated. Here multiple workloads share implemented resources. This makes it possible to create a workload and assign the amount of CPU and concurrency to it.

In the case of having for example 1000 DWU (Data Warehouse Units), Azure Synapse facilitates the operation of assigning for example a percentage of work to sales and another to marketing (for example 60% to one and 40% to the other). The idea is to facilitate administration and prioritize database queries.

In terms of data preparation and ingestion, it supports streaming in an integrated manner (Native SQL Streaming) to generate analyses, for example with integration with Event Hub or an IoT Hub. And it achieves this by achieving high performance of up to 200MB/second, delivery latencies in seconds, ingest performance scales with computing scale, and analysis capabilities with Microsoft SQL-based queries for combinations, aggregations, filters...

3. Azure Synapse: Additional features

Finally, we cannot finish without highlighting other interesting aspects of Azure Synapse Analytics that help speed up data loading and facilitate processes. Among them are:

- For data preparation and loads, the Copy order makes external tables no longer necessary, since it allows you to load tables directly into the database.

- It offers full support for standard CSV: line breaks and custom delimiters and SQL dates.

- Provides user-controlled file selection (wildcard support)

- Machine Learning support: Machine Learning models can be created and saved in ONNX format, which are stored within the Azure Synapse data store and used with the native PREDICT instruction.

- Integration with Data Lake: from Azure Synapse, files are read in the Data Lake in Parquet format, which achieves a much higher performance improving Polybase execution over 13x.

In short, a service that guarantees the development line to ensure SQL DW customers can continue running existing data storage workloads in production and automatically benefit from new features.

Before you go...

Don't miss our free guide on how to foster a self-service BI strategy using Power BI!